The release of generative artificial intelligence (AI) platforms, such as Midjourney and DALL-E 2, to the public has created a new societal challenge which we must address: How do we compensate people for their work, or for their assets, that have been used to train generative AI platforms? In the case of image generation, these platforms have been trained using large data sets of existing images, including those of artwork produced by living artists. Generative chat platforms are being trained with data pulled from a multitude of written sources, generative music systems with published music, and targeted advertising systems with social media usage data. Should artists be compensated when images are generated in their style, should writers and musicians be similarly compensated, and should individuals be compensated when someone benefits from their personal data? I believe yes.

Example: AI Generated Art “in the Style of”

Let’s start with the “sexy” example, generated images. Following are four pictures:

- Johannes Vermeer’s Girl with a Pearl Earring.

- A DALL-E 2 generated image of a sea otter in the style of Vermeer, which at the time of this writing comes up as an example when working with the platform.

- A comic panel drawn by John Byrne, my favourite comic artist, of The Fantastic Four fighting Galactus.

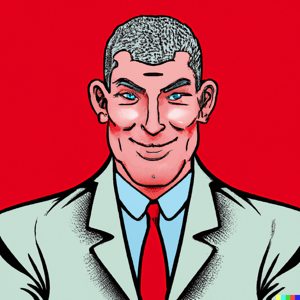

- A DALL-E 2 generated image of a manager in the style of John Byrne that I generated using the platform.

The issue is that the DALL-E images are generated in the style of an artist, and as a result are not direct copyright infringements. This is clearly true of the Vermeer-based image because his work is in the public domain. But what about the Byrne-inspired image? Byrne’s work is still covered by copyright law, yet some people argue that this is OK because current intellectual property (IP) law – copyright, trademark, and patent – doesn’t address “in the style of” issues. The response to this claim is that when current IP law was developed generative AI didn’t exist, so the writers of the law didn’t think to cover it. Now that we find ourselves in new territory we need to revisit existing legal frameworks.

Fans of John Byrne, and even many non-fans, can see a comic panel and instantly recognize it as his work. The same can be said for many other artists. This is because artists spend years honing their craft, and after significant effort will develop a style that they are known for and, more importantly, get paid for. Then along come generative AI systems, which in minutes process images of the work that artists spent decades creating. If someone can own the rights to a piece of artwork, shouldn’t the artist own the rights to their artworking?

But, of course, it’s not this simple. The generated image of the manager reflects the cumulative work of many people, not just John Byrne. And certainly the artists whose work went into training the AI have far more claim to ownership than I do, even though I was the one who wrote the description and paid to have it generated. Yet there is also skill in using these new AI tools effectively (skill that I clearly don’t have), so AI artists arguably also have an ownership stake in what they create.

Example: Personal Data in Large AI Models

For the sake of this discussion, personal data includes both data about a person, such as their name, and data generated by them, such as their internet browsing history. All types of personal data are being used to train large models due to the easy availability of such “big data,” often going back years. There are many potential applications for your personal data:

- Targeted advertising. Advertisers are not only using your personal data to identify products and services that you may be interested in, they’re combining that with personal data from your connections too.

- Process improvement. Companies are using the data that they have about you, or are able to glean from other sources, to improve their own ways of working. This includes but isn’t limited to training and evolving their AI models.

- Healthcare. Organizations are combining healthcare data, data from wearables, and shopping data to help them to hone their healthcare offerings.

- Driving recommendations. Products such as Waze combine real-time and historical data from millions of drivers to provide real-time advice to drivers regarding the best route to get them to their destination.

All of these applications, and more, provide value to you as an individual or at least have the potential to do so. But there is a saying in the software world: If you’re not paying for a product then you are the product. What is meant by that is that nothing is truly free. If you’re not paying money to use an app then the organization that offers it is monetizing the data that you are providing to it. People will often unknowingly sign away the rights to their personal data, including usage data, via the license agreements they’re forced to accept when they first start working with an application or platform. Sometimes that data is being used to train the large models of those companies, sometimes it’s being sold to other organizations that are doing so. Is the free usage of these applications sufficient compensation for your personal data, or should you receive monetary compensation as well?

One potential solution is the use of personal data services that provide people with the ability to maintain and control their own information rather than entrust it to various corporations. More on this in future blogs.

Implications

I believe that there are several critical societal implications:

- We need to recognize that the genie is out of the bottle. What we’re seeing with visual artwork and writing is only the beginning. The generated work will just get better over time as the AIs improve. We will also see AIs applied in a wider range of professions. For example, we’re already seeing expansion into music (e.g. OpenAI’s Jukebox and Shutterstock’s Amper). How long will it be until your profession is targeted?

- We need to recognize that people own the rights to both their work and their way of working (their style). Just as someone should be able to sell the rights to their work, they should also be able to sell the rights to their style.

- We need to develop mechanisms so that people can be compensated for their style. One bright light is Shutterstock, which has developed a fund for artists whose works have contributed to its generative AI offering. This strategy appears similar to the strategy used by Spotify to pay musicians for their work, and I suspect it will take a year or more to determine how well the Shutterstock approach works and where it needs to be adjusted.

- We need to evolve our legal frameworks. This is twofold. First, we need to extend copyright laws to address ownership of ways of working (WoW)/style in addition to ownership of the work itself. Second, we need stronger privacy laws to address personal data privacy. This is ongoing work, with the EU’s GDPR being one example.

Parting Thoughts

To paraphrase Martin Niemöller:

First the AIs came for the artists, and I did not speak out – because I was not an artist.

Then the AIs came for the writers, and I did not speak out – because I was not a writer.

Then the AIs came for the musicians, and I did not speak out – because I was not a musician.

Then the AIs came for me – and there was no one left to speak for me.

To learn more about the ongoing work around AI Ethics, the following pages are great reads:

- Artificial Intelligence at Google: Our Principles

- European Network of Human-Centered Artificial Intelligence

- UNESCO’s Recommendation on the Ethics of Artificial Intelligence

4 Comments

Howard Podeswa

I’m both a professional artist and IT professional so this touches me in a lot of ways. First off-the-cuff thought: the issue of who owns my style is not new to AI. If a human artist paints a work in my style does this infringe on my legal rights? It’s a complicated question because a solid yes would mean that a lot of historical art which was itself strongly ‘inspired by’ past artworks would be rejected. That includes me, Picasso, Sargent and many other artists who have created paintings that borrow heavily from Velázquez’s Las Meninas, as well as every artist who starts out basing their work on the style of other artists they admire. But when you see an outright steal, it hurts and feels unfair, and AI will only amplify that. I don’t know what the answer is but I think that if we already have an answer for the human case we should be able to apply it to the AI context.

Scott Ambler

Howard, you’re right, this isn’t new. What is new though is the scale. Now that these generative AI systems are available to the public we’re seeing millions of people potentially infringing on the rights of creatives. Before this, I would have had no hope of drawing someone sitting at a desk in anything resembling the artwork of an existing artist. Frankly I would have struggled to draw a stick figure doing so. Now, with a bit of typing, I can create images in the style of any artist that I choose. Granted, DALL-E is currently a bit rough, but it will only get better. And quickly. Other platforms, such as Midjourney, are incredible for certain types of art. And they will only get better too.

I don’t know how it’s going to play out, but I do know that it will get out of hand long before there are updates to appropriate legal frameworks. I also know that there are many artists, most of whom were already struggling to make a living at their craft, who are seeing this as the death knell of their chosen career. Musicians and writers are not far behind the artists.

Indubala Kachhawa

Hello Scott, I am a writer, majoring in creative/poetry writing, and I indeed feel my job is under attack by the recent AI bot, ChatGPT.

ChatGPT can craft a poem/essay in just a minute. How does one get to know if some content is an authentic creation by the author or a bot-generated piece?

This is scary. Machines overtook us on IQ a few decades back, and today machines are overtaking us on EQ (emotional quotient) too.

AI, which promised to revolutionize the world, is surely doing so by replacing humans and taking over the one differentiating factor, the emotional quotient: the ability to think and process emotions.

I agree with you that there must be a framework, legal or otherwise, for a Responsible AI mindset for the genie which is now out of the bottle, as you put it.

I understand that technological advancement and change are inevitable.

My question is: are the big tech/hi-tech giants coming up with a plan for Responsible AI, or something along the lines you mention for copyright laws?

Scott Ambler

Yes, the tech giants are in fact working on responsible AI and there are several movements to that effect. So are several governments. The challenge is that the technology is evolving swiftly and we’re still in the early days of figuring out the implications of it. Regulations will certainly lag behind as they always have.

In short, expect some short-term pain that may or may not be alleviated at some point in the future.